Andrej Risteski


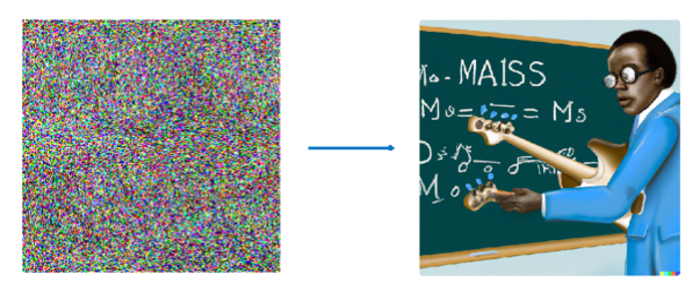

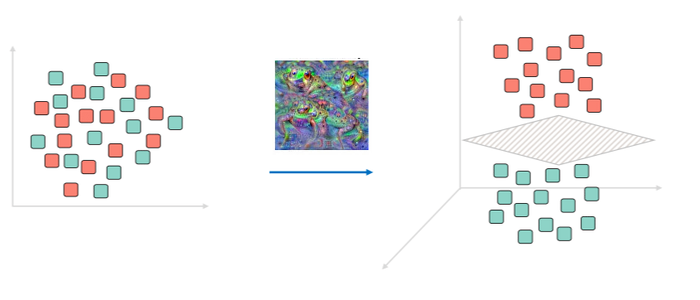

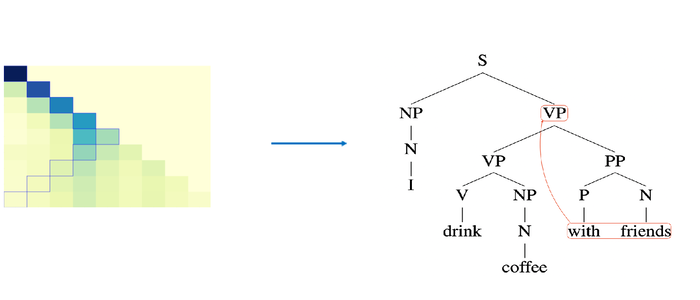

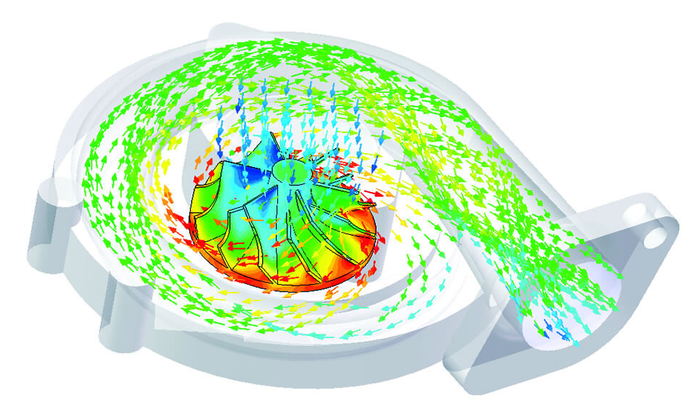

I am an Assistant Professor in the Machine Learning Department at Carnegie Mellon University. Before that, I was a Norbert Wiener Research Fellow, jointly in the Applied Math department and IDSS at MIT. I received my PhD from the Computer Science Department at Princeton University, advised by Sanjeev Arora.

My research interests lie at the intersection of machine learning, statistics, and theoretical computer science. They span topics such as (probabilistic) generative models, algorithmic tools for learning and inference, representation and self-supervised learning, out-of-distribution generalization, and applications of neural approaches to natural language processing and scientific domains.

I am the recipient of an Amazon Research Award ("Causal + Deep Out-of-Distribution Learning") and an NSF CAREER Award ("Theoretical Foundations of Modern Machine Learning Paradigms: Generative and Out-of-Distribution"). I am also supported in part by NSF award IIS-2211907 ("Foundations of Self-Supervised Learning Through the Lens of Probabilistic Generative Models").

The easiest way to reach me is by email. My address is aristesk at andrew.cmu.edu