95-865: Unstructured Data Analytics

(Spring 2024 Mini 4)

Lectures:

Note that the current plan is for Section B4 to be recorded.

- Section A4: Mondays and Wednesdays 5pm-6:20pm, HBH 1206

- Section B4: Mondays and Wednesdays 3:30pm-4:50pm, HBH 1206

Recitations (shared across Sections A4/B4): Fridays 5pm-6:20pm, HBH A301

Instructor: George Chen (email: georgechen ♣ cmu.edu; replace "♣" with the "at" symbol)

Teaching assistants:

- Hao Hao (email: haohao ♣ andrew.cmu.edu)

- Grace Eunji Kim (email: eunjik ♣ andrew.cmu.edu)

- Ellen Song (email: yuhans2 ♣ andrew.cmu.edu)

- Siyu Tao (email: siyutao ♣ andrew.cmu.edu)

- Bochen Wang (email: bochenw ♣ andrew.cmu.edu)

- Michelle Wang (email: manqiaow ♣ andrew.cmu.edu)

Office hours (starting the second week of class): Check the course Canvas homepage for office hour times and locations.

Contact: Please use Piazza (follow the link to it within Canvas) and, whenever possible, post so that everyone can see (if you have a question, chances are other people can benefit from the answer as well!).

Course Description

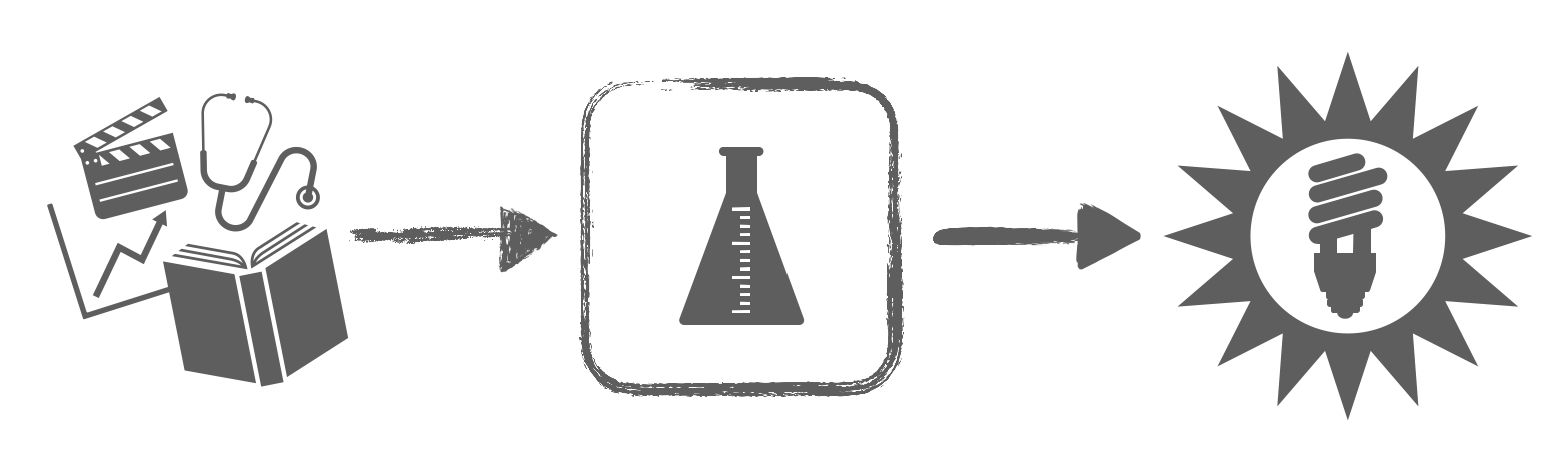

Companies, governments, and other organizations now collect massive amounts of data such as text, images, audio, and video. How do we turn this heterogeneous mess of data into actionable insights? A common problem is that we often do not know ahead of time what structure underlies the data, which is why such data are often referred to as "unstructured". This course takes a practical, two-step approach to unstructured data analysis:

- We first examine how to identify possible structure present in the data via visualization and other exploratory methods.

- Once we have clues for what structure is present in the data, we turn toward exploiting this structure to make predictions.

We will be coding a lot in Python and will dabble a bit in GPU computing (using Google Colab).

Note regarding foundation models (such as Large Language Models): As you are likely all aware, there are now technologies like (Chat)GPT, Gemini, Llama, etc. that will keep getting better over time. If you use any of these in your homework, please cite them. For the purposes of the class, I will view these as external resources/collaborators. For exams, I want to make sure that you actually understand the material and are not just telling me what someone else or GPT/Gemini/etc. knows. This is important so that in the future, if you use AI technologies to assist you in your data analysis, you have enough background knowledge to check for yourself whether the AI is giving you a correct solution. For this reason, exams this semester will explicitly prohibit the use of electronics.

Prerequisite: If you are a Heinz student, then you must have taken 95-888 "Data-Focused Python" or 90-819 "Intermediate Programming with Python". If you are not a Heinz student and would like to take the course, please contact the instructor and clearly state what Python courses you have taken/what Python experience you have.

Helpful but not required: math at the level of calculus and linear algebra, which may help you appreciate some of the material more.

Grading: Homework (30%), Quiz 1 (35%), Quiz 2 (35%*)

*Students with the most instructor-endorsed posts on Piazza will receive a slight bonus at the end of the mini, which will be added directly to their Quiz 2 score (a maximum of 10 bonus points, so that it is possible to get 110 out of 100 points on Quiz 2).

Letter grades are determined based on a curve.
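To make the weighting and the Piazza bonus concrete, here is a small sketch of the weighted numeric score that the curve would then be applied to. The weights (30/35/35) and the rule that up to 10 bonus points are added directly to Quiz 2 come from the grading policy above; everything else is an assumption: each component is taken to be on a 0-100 scale, the helper name `course_score` is hypothetical, and the curve itself is not modeled.

```python
def course_score(homework, quiz1, quiz2, piazza_bonus=0.0):
    """Hypothetical helper: weighted numeric score before the curve.

    Assumes every component is on a 0-100 scale. Weights follow the
    syllabus: homework 30%, Quiz 1 35%, Quiz 2 35%. Piazza bonus points
    (at most 10) are added directly to the Quiz 2 score.
    """
    bonus = min(max(piazza_bonus, 0.0), 10.0)  # clamp bonus to the 0-10 range
    quiz2_with_bonus = quiz2 + bonus  # may exceed 100, up to 110
    return 0.30 * homework + 0.35 * quiz1 + 0.35 * quiz2_with_bonus


# Example: 90 on homework, 80 on Quiz 1, 85 on Quiz 2, 4 Piazza bonus points
print(round(course_score(90, 80, 85, piazza_bonus=4), 2))  # prints 86.15
```

Because the bonus enters through Quiz 2, its effect on the weighted score is scaled by 0.35: the full 10 bonus points are worth at most 3.5 points overall.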

Calendar for Sections A4/B4 (tentative)

Previous version of course (including lecture slides and demos): 95-865 Fall 2023 mini 2

| Date | Topic | Supplemental Materials |
|---|---|---|
| Part I. Exploratory data analysis | | |
| Mon Mar 11 | Lecture 1: Course overview, analyzing text using frequencies [slides] Please install Anaconda Python 3 and spaCy by following this tutorial (needed for HW1 and the demo next lecture): [slides] Note: Anaconda Python 3 includes support for Jupyter notebooks, which we use extensively in this class. | |
| Wed Mar 13 | Lecture 2: Basic text analysis demo (requires Anaconda Python 3 & spaCy) [slides] [Jupyter notebook (basic text analysis)] HW1 released (check Canvas) | |
| Fri Mar 15 | Recitation slot: Python review [Jupyter notebook] | |
| Mon Mar 18 | Lecture 3: Wrap up basic text analysis, co-occurrence analysis [slides] [Jupyter notebook (basic text analysis using arrays)] [Jupyter notebook (co-occurrence analysis toy example)] | As we saw in class, PMI is defined in terms of log probabilities. Here's additional reading that provides some intuition on log probabilities (technical): [Section 1.2 of lecture notes from CMU 10-704 "Information Processing and Learning" Lecture 1 (Fall 2016) discusses the "information content" of random outcomes, which is defined in terms of log probabilities] |
| Wed Mar 20 | Lecture 4: Co-occurrence analysis (cont'd), visualizing high-dimensional data with PCA [slides] [Jupyter notebook (text generation using n-grams)] | Additional reading (technical): [Abdi and Williams's PCA review] Supplemental videos: [StatQuest: PCA main ideas in only 5 minutes!!!] [StatQuest: Principal Component Analysis (PCA) Step-by-Step (note that this is a more technical introduction than mine, using SVD/eigenvalues)] [StatQuest: PCA - Practical Tips] [StatQuest: PCA in Python (note that this video is more Pandas-focused, whereas 95-865 is taught in a more NumPy-focused manner to better prepare you for working with PyTorch later)] |
| Fri Mar 22 | Recitation slot: More practice with coding in terms of arrays, how to save Jupyter notebooks as PDFs [slides] | |
| Mon Mar 25 | Lecture 5: PCA (cont'd) [slides] [Jupyter notebook (PCA)] HW1 due 11:59pm | See the supplemental materials for the previous lecture |
| Wed Mar 27 | Lecture 6: Manifold learning [slides] [required reading: "How to Use t-SNE Effectively" (Wattenberg et al, Distill 2016)] [Jupyter notebook (manifold learning)] HW2 released (check Canvas) | Python examples for manifold learning: [scikit-learn example (Isomap, t-SNE, and many other methods)] Supplemental video: [StatQuest: t-SNE, clearly explained] Additional reading (technical): [The original Isomap paper (Tenenbaum et al 2000)] [some technical slides on t-SNE by George for 95-865] [Simon Carbonnelle's much more technical t-SNE slides] [t-SNE webpage] |
| Fri Mar 29 | Recitation slot: More on PCA and manifold learning [slides] [Jupyter notebook (more on PCA, argsort)] [Jupyter notebook (PCA and t-SNE with images)] Note: for the demo on t-SNE with images to work, you will need to install some packages: pip install torch torchvision | |
| Mon Apr 1 | Lecture 7: Clustering [slides] [Jupyter notebook (dimensionality reduction and clustering with drug data)] | Clustering additional reading (technical): [see Section 14.3 of the book "Elements of Statistical Learning" on clustering] Supplemental video: [StatQuest: K-means clustering (note: the elbow method is specific to using total variation (i.e., residual sum of squares) as a score function; the elbow method is *not* always the approach you should use with other score functions (e.g., the CH index was not designed to be used with the elbow method))] |
| Wed Apr 3 | Lecture 8: Clustering (cont'd) [slides] We continue using the same demo from last time: [Jupyter notebook (dimensionality reduction and clustering with drug data)] | |
| Fri Apr 5 | Recitation slot: Quiz 1 (80-minute exam), covering material up to and including last Friday's (March 29) recitation | |
| Mon Apr 8 | Lecture 9: Wrap up clustering, topic modeling [slides] [required reading: Jupyter notebook (clustering with images)] [Jupyter notebook (topic modeling with LDA)] | Topic modeling reading: [David Blei's general intro to topic modeling] [Maria Antoniak's practical guide for using LDA] |
| Part II. Predictive data analysis | | |
| Wed Apr 10 | Lecture 10: Wrap up topic modeling, intro to predictive data analysis [slides] [Jupyter notebook (prediction and model validation)] | |
| Fri Apr 12 | No class (CMU Spring Carnival) | |
| Mon Apr 15 | Lecture 11: Intro to neural nets & deep learning [slides] We continue using the demo from last lecture. HW2 due 11:59pm | PyTorch tutorial (at the very least, go over the first page of this tutorial to familiarize yourself with going between NumPy arrays and PyTorch tensors, and also understand the basic explanation of how tensors can reside on either the CPU or a GPU): [PyTorch tutorial] Additional reading: [Chapter 1 "Using neural nets to recognize handwritten digits" of the book Neural Networks and Deep Learning] Video introduction on neural nets: ["But what *is* a neural network? \| Chapter 1, deep learning" by 3Blue1Brown] StatQuest series of videos on neural nets and deep learning: [YouTube playlist (note: there are a lot of videos in this playlist, some of which go into more detail than you're expected to know for 95-865; make sure that you understand concepts at the level at which they are presented in lectures/recitations)] |
| Wed Apr 17 | Lecture 12: Wrap up neural net basics; image analysis with convolutional neural nets (also called CNNs or convnets) [slides] For the neural net demo to work, you will need to install some packages: pip install torch torchvision torchaudio torchtext torchinfo [Jupyter notebook (handwritten digit recognition with neural nets; be sure to scroll to the bottom to download UDA_pytorch_utils.py)] | Additional reading: [Stanford CS231n Convolutional Neural Networks for Visual Recognition] [(technical) Richard Zhang's fix for max pooling] Also check the StatQuest neural net and deep learning YouTube playlist (in supplemental materials for Lecture 11; there's a video in the playlist on CNNs) |
| Fri Apr 19 | Recitation slot: More details on hyperparameter tuning, model evaluation, neural network training [slides] [Jupyter notebook] | |
| Mon Apr 22 | Lecture 13: Time series analysis with recurrent neural nets (RNNs) [slides] [Jupyter notebook (sentiment analysis with IMDb reviews; requires UDA_pytorch_utils.py from the previous lecture's demo)] | Also check the StatQuest neural net and deep learning YouTube playlist (in supplemental materials for Lecture 11; there's a video in the playlist on RNNs) |
| Wed Apr 24 | Lecture 14: Text generation with RNNs and generative pre-trained transformers (GPTs); course wrap-up [slides] [Jupyter notebook (text generation with neural nets)] | Additional reading/videos: [Andrej Karpathy's "Neural Networks: Zero to Hero" lecture series (including a more detailed GPT lecture)] [A tutorial on BERT word embeddings] Software for explaining neural nets: [Captum] Some articles on being careful with explanation methods (technical): ["The Disagreement Problem in Explainable Machine Learning: A Practitioner's Perspective" (Krishna et al 2022)] ["Do Feature Attribution Methods Correctly Attribute Features?" (Zhou et al 2022)] ["The false hope of current approaches to explainable artificial intelligence in health care" (Ghassemi et al 2021)] |
| Fri Apr 26 | Recitation slot: More details on RNNs, transformers, some other deep learning topics | |
| Mon Apr 29 | HW3 due 11:59pm | |
| Final exam week | Quiz 2 (80-minute exam): Friday May 3, 1pm-2:20pm HBH A301 | |
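The Lecture 3 entry notes that PMI is defined in terms of log probabilities. As a minimal sketch of that definition, PMI(a, b) = log(P(a, b) / (P(a) P(b))), the snippet below works in plain Python. It rests on stated assumptions, not on the course demo: probabilities are estimated as the fraction of toy "documents" (sets of words) that contain a term or both terms, the natural log is used, and the helper name `pmi` and the toy data are hypothetical.

```python
import math


def pmi(docs, a, b):
    """Pointwise mutual information log(P(a, b) / (P(a) * P(b))).

    Assumption: each probability is estimated as the fraction of
    documents (sets of words) that contain a, b, or both.
    """
    n = len(docs)
    p_a = sum(a in d for d in docs) / n
    p_b = sum(b in d for d in docs) / n
    p_ab = sum(a in d and b in d for d in docs) / n
    if p_ab == 0:
        return float("-inf")  # the two terms never co-occur: log(0)
    return math.log(p_ab / (p_a * p_b))


# Hypothetical toy corpus: each document is the set of words it contains
docs = [
    {"apple", "iphone", "store"},
    {"apple", "iphone"},
    {"banana", "store"},
    {"banana", "smoothie"},
]

print(pmi(docs, "apple", "iphone"))  # log 2: co-occur more often than chance
print(pmi(docs, "apple", "store"))   # 0.0: exactly what independence predicts
print(pmi(docs, "apple", "banana"))  # -inf: never co-occur
```

The sign is the useful part. PMI is positive when two terms co-occur more often than independence would predict, zero when P(a, b) = P(a) P(b), and negative (here minus infinity) when they co-occur less often. A different log base only rescales every PMI value by the same constant, so rankings do not change.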